For many organizations, the first chapter of AI adoption looked the same: a few isolated pilots, a handful of innovation teams, and a lot of excitement without a clear path to scale. The next chapter is different. It is not about whether AI works. It is about whether AI can be democratized across the enterprise in a way that is secure, governed, practical, and measurable.

That is where Azure AI Foundry becomes strategically important. Microsoft describes Foundry as a unified Azure platform for enterprise AI operations, model builders, and application development, bringing together agents, models, and tools with built-in tracing, monitoring, evaluations, and enterprise controls such as RBAC, networking, and policies. In executive terms, that means a single foundation for moving AI from experimentation to repeatable business value.

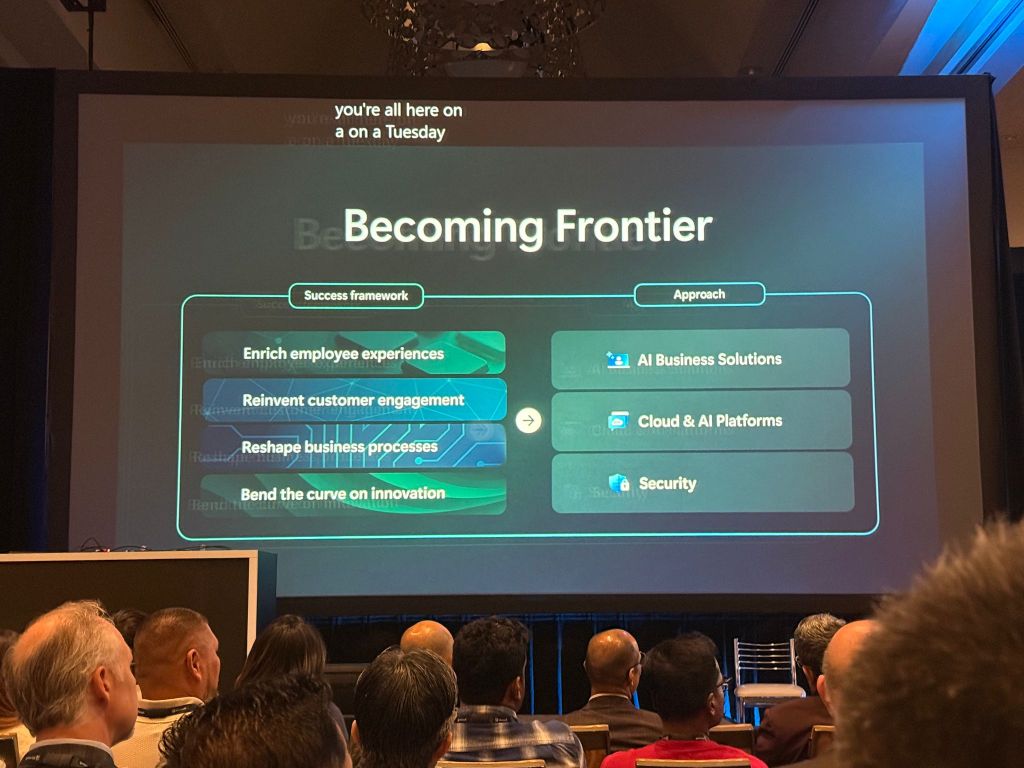

AI democratization does not mean letting every team run disconnected experiments. It means making AI accessible across functions while preserving the guardrails leaders care about most: security, compliance, reliability, cost control, and trust. It is the difference between “some people are using AI” and “our company is building an AI operating model.” Microsoft’s own adoption guidance frames this journey in stages: from early pilots to grounding AI with enterprise data, then to building intelligent agents and workflows, and ultimately to scaling with enterprise observability, governance, and production controls.

This matters because most executive teams are no longer asking for another proof of concept. They are asking tougher questions. How do we make AI usable across HR, operations, finance, service, and customer experience? How do we avoid fragmented tooling? How do we move quickly without creating unmanaged risk? How do we ensure AI is helping our workforce do better work, not simply creating more noise?

Azure AI Foundry answers those questions by giving organizations a common layer for model access, orchestration, evaluation, and governance. It supports a broad catalog of foundation models from Microsoft and third-party providers, and it offers serverless model access so teams can use leading models without provisioning and managing their own GPU infrastructure. That lowers the barrier to entry for business teams while still allowing IT and architecture leaders to maintain control over standards and deployment patterns.

The executive opportunity is clear: democratize access, centralize governance, and industrialize adoption.

Consider what that looks like in practice.

At AUDI AG, the need was not abstract innovation. It was a practical employee experience challenge: how to give workers faster access to answers without expanding support overhead. Using Azure AI Foundry and related Azure services, Audi deployed its first AI-powered assistant in just two weeks and then moved to scale the same framework across additional agents. The lesson for executives is powerful: when the platform foundation is ready, AI moves from months of setup to weeks of business delivery.

At Baringa, the challenge was knowledge work productivity. The firm used Azure AI Foundry and Azure OpenAI to build an internal generative AI platform that accelerated document drafting by 50 percent, with time savings of up to three days per document. This is a strong example of AI democratization because it takes a capability once reserved for technical specialists and embeds it directly into the daily workflow of consultants and delivery teams.

At Hughes, Azure AI Foundry was used to build 12 production applications, including automated sales call auditing and field service process support. Microsoft reports a 90 percent reduction in sales call audit costs and productivity gains of up to 25 percent. That is what democratization looks like when AI is not confined to a lab, but applied across frontline operations.

In healthcare, the story becomes even more compelling: healow manages more than 50 million patient communications for customers and used Azure OpenAI in Azure AI Foundry Models to power a secure, real-time contact center experience. For executives, the takeaway is not just automation. It is that AI can be democratized even in highly sensitive, regulated environments when security and compliance are designed into the platform from the start.

And in enterprise operations, NTT DATA has used the Microsoft AI ecosystem, including Azure AI Foundry, to launch agentic AI services with up to 65 percent automation in IT service desks and up to 100 percent automation in some order workflows. This shows where the conversation is heading next: from copilots that assist, to agents that execute.

So what should executives do now?

First, stop treating AI as a collection of isolated use cases. Start treating it as a capability layer for the business. The most successful organizations do not scale AI one department at a time with separate tools, policies, and vendors. They create a reusable platform and a clear adoption motion.

Second, begin with high-friction workflows where speed, consistency, and knowledge access matter. Internal assistants, service desks, document creation, customer service, compliance reviews, and knowledge search are often the right opening moves. These are areas where AI can deliver measurable value quickly while building organizational confidence. Microsoft’s adoption guidance explicitly points to early pilots, then grounding with enterprise data through retrieval-augmented generation, before expanding into more autonomous workflows and enterprise-wide scale.

Third, ground AI in enterprise context. Generic AI can impress in demos. Grounded AI creates business value. Attaching enterprise knowledge, documents, and internal data, as Microsoft’s Foundry adoption guidance recommends, makes systems more relevant, accurate, and useful for real work. This is the pivot from novelty to trust.
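For teams that want to see what grounding means mechanically, here is a minimal sketch. It assumes a tiny in-memory set of documents and a naive keyword-overlap score standing in for a real enterprise search index. The document contents, function names, and prompt wording are all hypothetical, and the sketch does not call any Azure or Foundry API; it only shows the pattern of retrieving internal content and attaching it to the question before a model sees it.

```python
import re

# Hypothetical internal documents. A real deployment would query a
# governed search index rather than a hard-coded dictionary.
DOCUMENTS = {
    "travel-policy": "Employees book travel through the internal portal and need manager approval.",
    "expense-policy": "Expense reports are due within 30 days and require receipts.",
    "it-helpdesk": "Reset your password through the self-service helpdesk page.",
}


def tokenize(text: str) -> set:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, top_k: int = 1) -> list:
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        DOCUMENTS,
        key=lambda doc_id: len(question_words & tokenize(DOCUMENTS[doc_id])),
        reverse=True,
    )
    return ranked[:top_k]


def build_grounded_prompt(question: str, top_k: int = 1) -> str:
    """Attach the retrieved documents to the question as cited context."""
    doc_ids = retrieve(question, top_k)
    context = "\n".join(f"[{doc_id}] {DOCUMENTS[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the context below and cite the source id.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    print(build_grounded_prompt("When are expense reports due?"))
```

The design point for leaders is that the model is asked to answer from named, governed sources rather than from general knowledge, which is what makes answers traceable and auditable.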

Fourth, govern from day one. Foundry’s responsible AI guidance emphasizes end-to-end security, observability, and governance with controls and checkpoints throughout the agent lifecycle. Executives should view this not as a brake on innovation, but as the reason innovation can scale safely. Democratization without governance creates shadow AI. Democratization with governance creates enterprise leverage.

Finally, measure success in business language. Not prompts written. Not pilots launched. Measure time saved, cycle time reduced, service quality improved, employee capacity unlocked, compliance strengthened, and revenue enabled. The organizations moving ahead are not simply adopting AI tools. They are redesigning how work gets done.
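As an illustration of what measuring in business language can look like, the sketch below turns before-and-after effort figures into two of the measures named above: time saved and cycle time reduced. The class name, field names, and all numbers are hypothetical placeholders chosen for the example; they are not drawn from any of the customer stories in this article.

```python
from dataclasses import dataclass


@dataclass
class WorkflowMetrics:
    """Before-and-after effort for one AI-assisted workflow (illustrative)."""

    name: str
    baseline_hours: float   # average hours per item before AI assistance
    assisted_hours: float   # average hours per item with AI assistance
    items_per_month: int    # how many items the workflow handles monthly

    def hours_saved_per_month(self) -> float:
        """Total hours returned to the team each month."""
        return (self.baseline_hours - self.assisted_hours) * self.items_per_month

    def cycle_time_reduction_pct(self) -> float:
        """Percentage reduction in effort per item."""
        return 100.0 * (self.baseline_hours - self.assisted_hours) / self.baseline_hours


if __name__ == "__main__":
    # Hypothetical figures for a single workflow.
    drafting = WorkflowMetrics(
        name="document drafting",
        baseline_hours=10.0,
        assisted_hours=6.0,
        items_per_month=50,
    )
    print(f"{drafting.name}: {drafting.hours_saved_per_month():.0f} hours saved per month, "
          f"{drafting.cycle_time_reduction_pct():.0f}% cycle time reduction")
```

Tracking a small set of figures like these per workflow gives leadership a consistent way to compare use cases and decide where to scale next.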

That is the real promise of Azure AI democratization.

It is not about making everyone a data scientist or an AI engineer. It is about making intelligence, automation, and decision support available across the enterprise in a controlled and scalable way. It is about giving every function access to the power of AI, without forcing every function to become an AI platform team.