One of the most valuable parts of being engaged in the Microsoft community is the opportunity to share real-world feedback that helps improve products, content, and community experiences. My contribution is not limited to learning and sharing knowledge. I also actively provide feedback to Microsoft based on what I see from customers, partners, community members, architects, developers, and business leaders.

Over the years, I have provided product feedback and content improvement suggestions through several Microsoft community and partner engagement channels, including Microsoft Fabric conferences, MVP PGI Connects, and the Microsoft AI Tour for Partners.

Feedback Through Microsoft Fabric Conferences

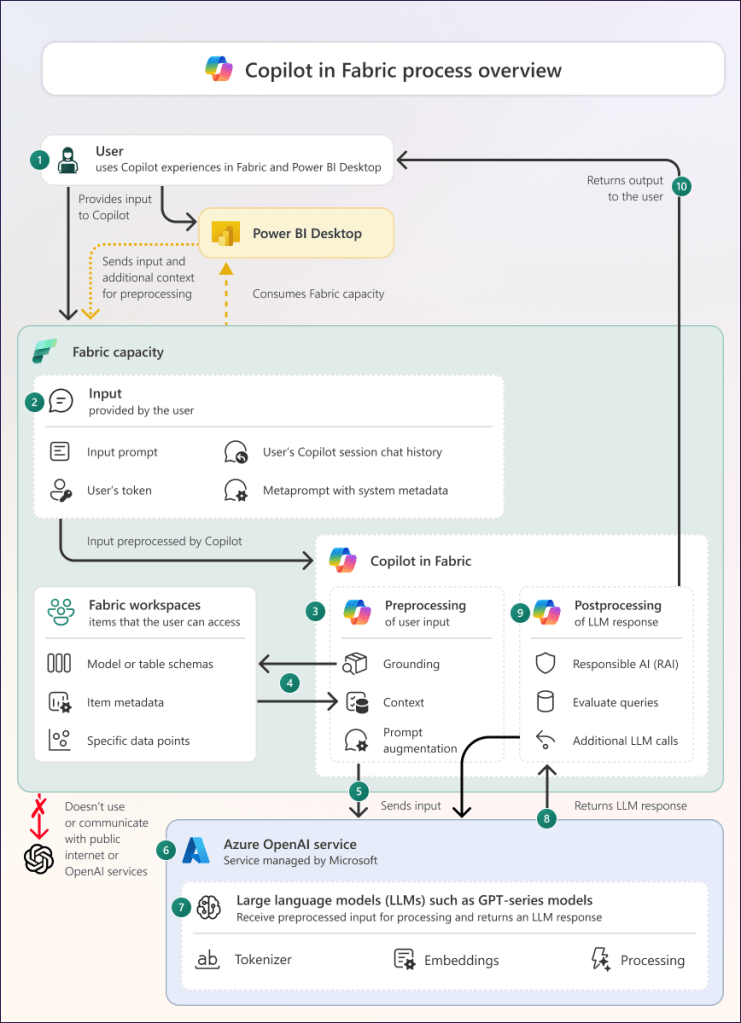

Microsoft Fabric represents a major shift in the data and analytics ecosystem. Through Fabric-related conferences and sessions, I have shared feedback on how customers and partners approach Fabric adoption, architecture, governance, data engineering, Power BI integration, security, migration patterns, and enterprise readiness.

My feedback often focuses on practical adoption challenges, such as:

- How Fabric messaging can be made clearer for enterprise decision-makers

- How architecture patterns can be explained more effectively for data teams

- How governance, lineage, and security guidance can be strengthened

- How content can better address real-world migration scenarios from legacy platforms

- How partners can better position Fabric value to customers

This feedback is shaped by real conversations with organizations that are evaluating or adopting Microsoft Fabric. My goal is to help Microsoft improve how Fabric is explained, adopted, and implemented across different industries.

Feedback Through MVP PGI Connects

MVP PGI Connects provide an important platform for direct engagement between MVPs and Microsoft product groups. Through these sessions, I have shared technical feedback, adoption insights, and content improvement suggestions based on community needs and enterprise customer scenarios.

These conversations are valuable because MVPs bring field-level experience from the community. I use these opportunities to highlight what users are asking about, where technical content may need more clarity, and what product guidance would help architects, developers, and business leaders make better decisions.

My feedback includes areas such as Azure AI, Microsoft Fabric, data architecture, responsible AI, enterprise governance, and solution design patterns.

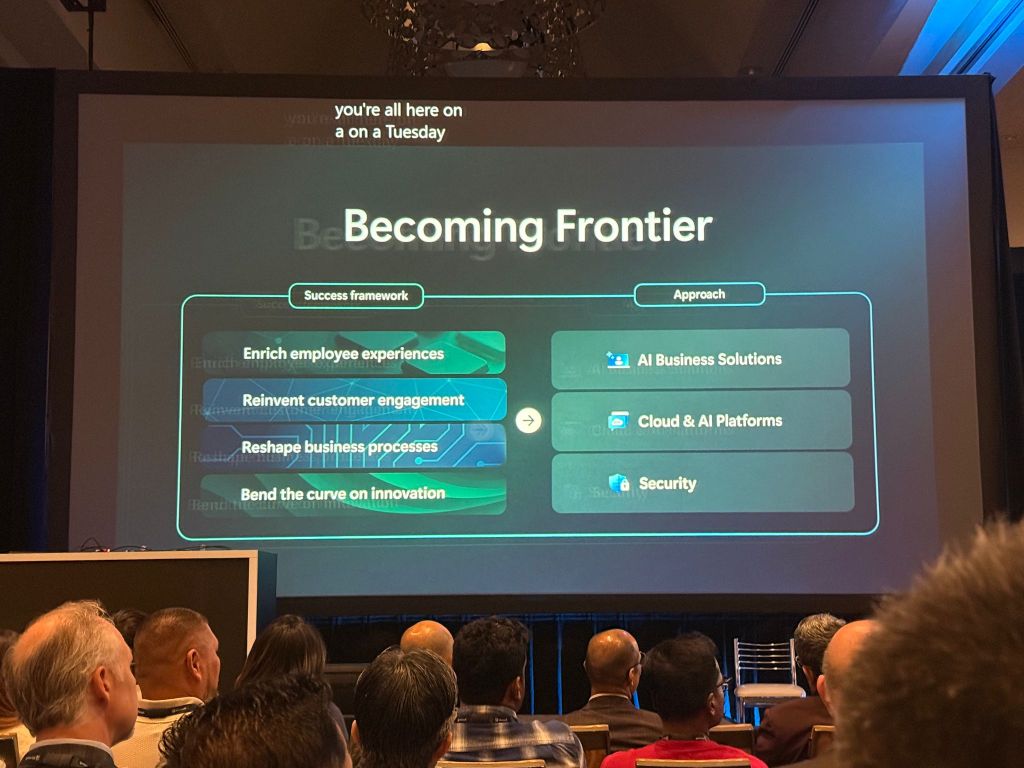

Feedback Through Microsoft AI Tour for Partners

The Microsoft AI Tour for Partners has also been an important channel for sharing feedback on AI adoption, partner enablement, and content readiness. As AI becomes a priority for more organizations, partners need clear, practical, and business-aligned guidance to help customers move from AI experimentation to production.

Through these engagements, I have provided feedback on:

- How AI content can better connect technical capabilities with business outcomes

- How partner enablement materials can be more practical and architecture-focused

- How Azure AI and Azure AI Foundry messaging can be simplified for customers

- How responsible AI, security, and governance should be emphasized early

- How partners can be better equipped with real-world demos, use cases, and adoption playbooks

Why This Feedback Matters

Product feedback matters because it helps close the gap between product innovation and real-world adoption. Microsoft is building powerful platforms across Azure, Fabric, and AI, but the success of these technologies depends on how clearly they are understood and how well they are adopted and implemented by customers and partners.

By sharing feedback from the field, I help amplify the voice of the community and bring practical insights back to Microsoft. This includes what is working well, what needs more clarity, and where additional content, demos, architecture guidance, or product improvements could create more value.

Final Thought

I have provided product feedback and content improvement suggestions to Microsoft through Fabric conferences, MVP PGI Connects, and the Microsoft AI Tour for Partners. My feedback is grounded in real-world customer conversations, partner enablement needs, and community learning experiences.

For me, this is an important part of being a Microsoft community contributor. It allows me not only to share Microsoft innovation with the community, but also to bring community insights back to Microsoft so that products, content, and adoption guidance continue to improve.