Problem statement

To create a Data Warehouse (DW) and Data Mart (DM) for multiple categories of market prices, to support queries of multiple pricing information from source data location(Oracle). Overall, this data is needed for different departments of the business to analyze the data wrt pricing information.

Difficulty that business is facing with current available solution

The data from the source location is not easily extracted and difficult for the business to report on as the tools are not user-friendly or inefficient to gather data when multiple curves as required for the business. The present data source is not designed to pull large amounts of data for analytical purposes and the business is currently pulling the data from source manually.

Which solution does the business looking for overcoming present difficulties

Looking for a cloud solution that will implement a self-serve model/process for accessing the complete data, that is simple to use, provides flexibility in gathering large amounts of data with best performance.

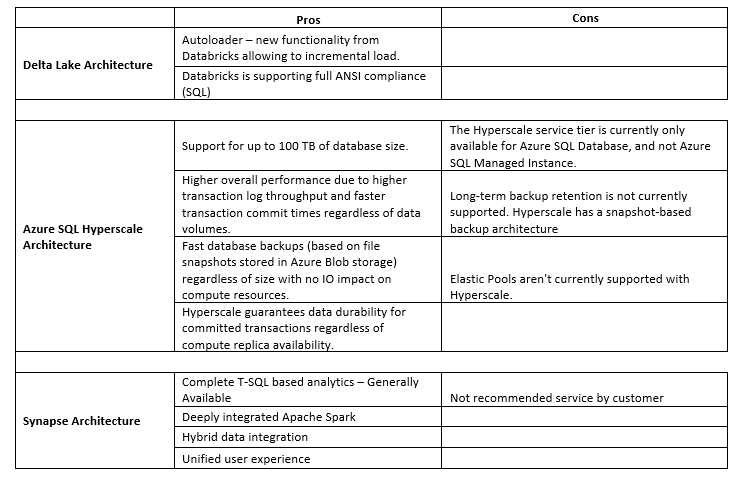

Proposed Architectures to the business

- Delta Lake Architecture

- SQL Hyperscale

- Azure Synapse Analytics

Approved Architecture and proposed solution

- Delta Lake Architecture

Delta Lake provides ACID transactions, scalable metadata handling, and unifies streaming and batch data processing. Access/revert to earlier versions of data for audits, rollbacks, or reproduce through Time travel and DML Operations such as SQL, Scala/Java and Python APIs to merge, update and delete datasets can be performed.

Azure Databricks is an Apache Spark-based analytics platform optimized for the Microsoft Azure cloud services platform. Industry leading Spark (Databricks Runtime) built on a highly optimized version of Apache Spark offering 50x performance.

- Well Architecture Framework

- Cost Optimization

- Effective for extremely large datasets

- Low latency and reliability

Because of these salient features Delta Lake Architecture is chosen for the business.

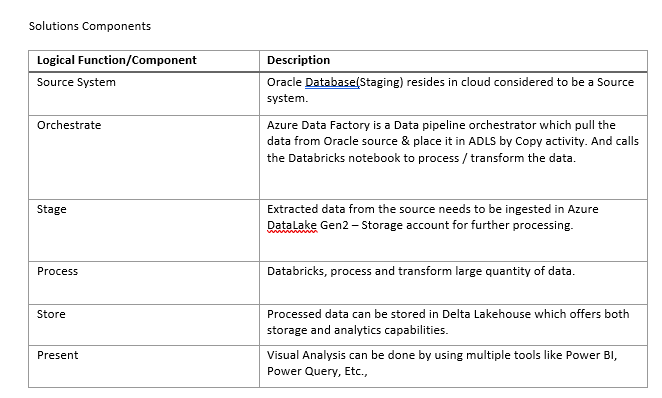

The logical architecture reflects data movement from source systems, transformation through the data platform and load the data in Delta Lake for future reports or Dashboards.

How did we achieved the required solution for the business using approved architecture

Integrating the Oracle System of the business providing the 5 day rolling data to Azure Global container where all the historic and incremental data to be transformed.

This data is transformed using Databricks notebooks by creating staging layers such as Bronze which holds the raw data from Global container then after applying user defined transformation into gold layer. These final delta tables are used for analysis purposes.